The virtual world sometimes feels like it’s trying to swallow the real one whole. Because of this, “AI labels” might be our best way forward right now.

Technology keeps amazing us every day. But this constant innovation also worries many people who use social media. Many companies are rushing to build AI tools, creating apps and features that let anyone generate AI content with just a few clicks. When it’s that easy, everyone does it. This leads to a big problem: AI-made images and audio seem so real that it’s almost impossible to tell them apart from authentic content. Who takes responsibility when things go wrong?

You’ve probably seen AI-generated pictures floating around social media. People create these using simple text prompts. The more details you give the AI, the more lifelike the images become. This causes real confusion about what’s true and what’s not. For example, some people use AI to create fake close-up pictures of themselves with artists. This harms the artists and misleads the public. Even worse, using someone’s photo to create fake nude images, a form of deepfake, can be against the law and can lead to serious lawsuits.

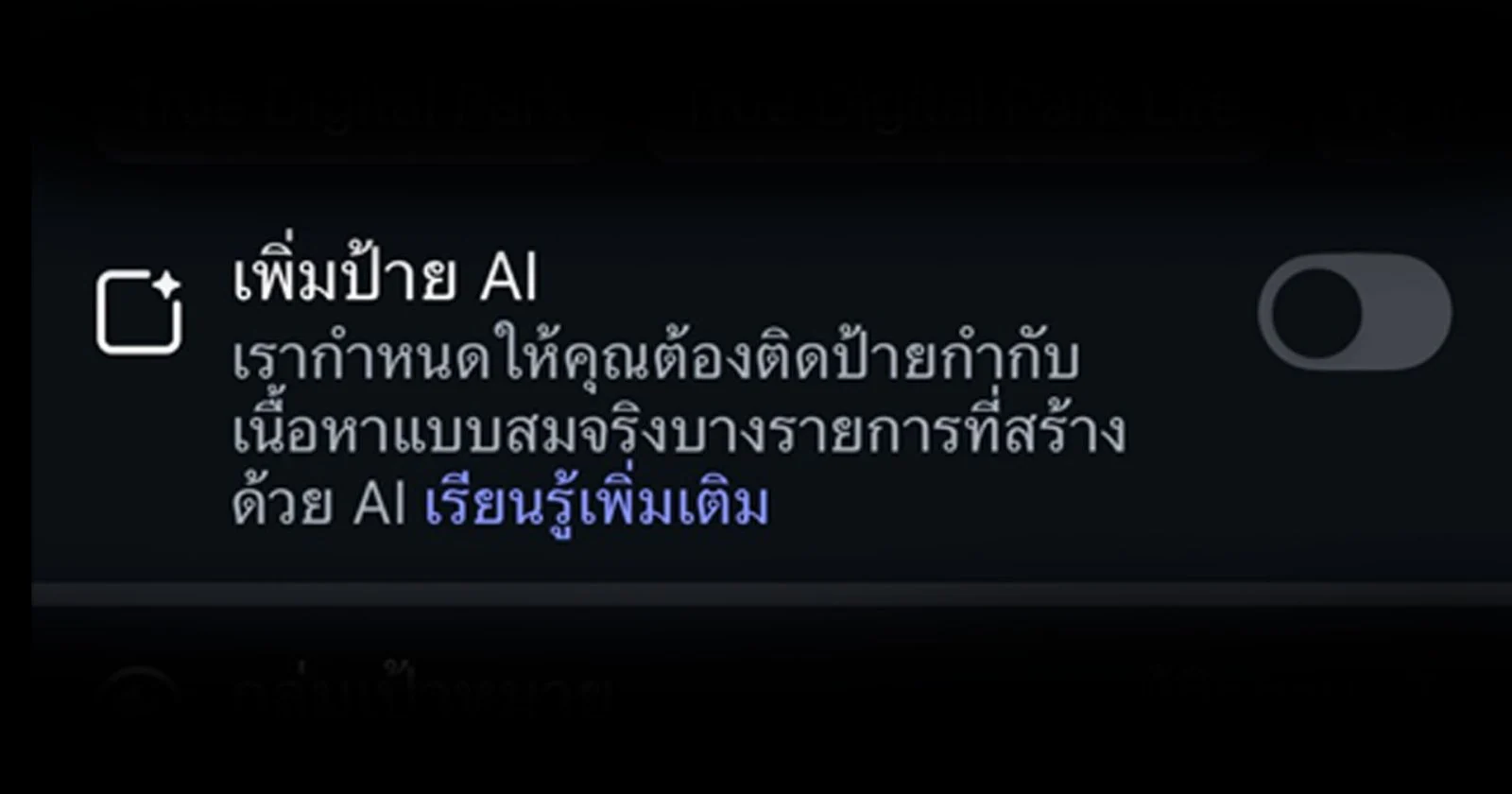

That’s why AI content labels are so important. They act like a clear warning sign, helping prevent misunderstandings by marking which content was made or altered by AI.

What Exactly Are AI Labels and Why Do We Need Them?

An AI label is a clear tag or symbol. It shows that content was made or changed by artificial intelligence.

Not long ago, AI started showing up more on social media platforms. Remember the excitement around AI chatbots? Then came tools that let users create images. Many saw this as a cool new opportunity. But for others, these images quickly became a mess that was hard to control.

Because of these growing issues, many top tech companies are now taking action. They are putting strict rules in place for AI content. Here are some examples:

- Meta announced in February 2024 that it would start labeling AI-generated images across Facebook, Instagram, and Threads. The label now reads “AI Info.” It is meant to help users recognize risks such as misinformation or fake nude images of public figures.

- Pinterest added an “AI modified” label in May 2025. This tag appears in the bottom-left corner when you look at an image up close.

- TikTok updated its rules in April 2025. Users must now disclose when content that shows realistic scenes was generated or changed by AI. The platform then displays labels like “Created by AI” or “Creator Labeled as AI-Generated.” This helps viewers tell real posts from AI-made ones. Also, in September 2025, TikTok Shop banned AI-generated content that imitates real people or is clearly edited.

Taking Control: Managing the AI We Create

AI labels are not just about technology. They touch on many social and legal issues.

- **Trust in Information:** Clear content sources make news, articles, and photos more believable. This helps everyone trust what they see and read.

- **Stopping Harmful Content:** Labels make it easier for platforms to find and remove damaging content, such as deepfakes and false information.

- **Building Smart Users:** Labels are a key tool for teaching people to be media-savvy. They encourage users to question the content they see online.

AI now plays a huge role in our daily lives. So “AI labels” aren’t just a nice idea; they’re absolutely necessary. They help users sort out truth from fiction. Social platforms are taking these policies seriously, which points to a global move towards transparency. This step aims to protect users from the dangers that come with advanced technology.

AI labeling might not be a perfect answer yet. But it is a very important first step. It helps create awareness and shared responsibility in our digital world.